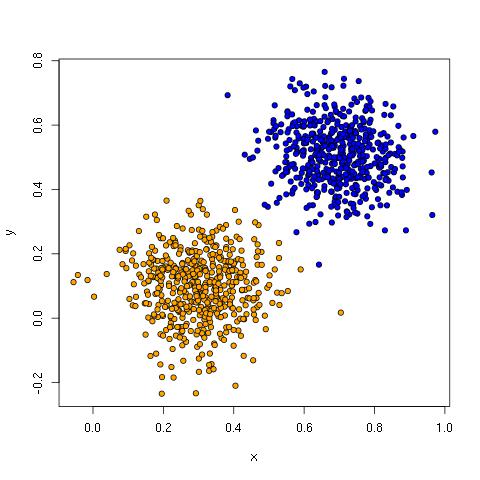

The united colors of Baldridge: my hand, my wife’s hand, and our boys’ hands.

This is a long and personal post about racism in the USA. It’s an outpouring of some of what I’ve felt this past year, with an appeal for us all, whether white, black, Hispanic, Asian, mixed, decidedly undeclared, or whatever, to not give up, to keep working to make this country, this world, a better place.

The catalyst for me to write this was a series of tweets by Shaun King (@shaunking) several months ago. King has emerged as one of the leaders of #BlackLivesMatter, a movement to document and address racism in the USA, and especially focus on police misconduct and brutality. In those tweets, King noted his acceptance of a pessimistic view that racism is a permanent feature of American society. It’s not an unreasonable perspective, but it deeply saddens me. As a white husband of a black woman and father of biracial children, I desperately want to remain optimistic. I need to remain optimistic. My family lives between two worlds, and we can’t pick sides. In this post, I want to give some support for embracing a more optimistic perspective. But first, let’s establish why there is good cause to be pessimistic.

It’s been a hell of a few years for race relations in the United States of America. From Trayvon Martin through to the Charleston shootings to Sam Dubose and Corey Jones, black people have been disproportionately killed. Included in the body count are far too many instances of police misconduct and brutality. This is violence meted out by the state, and the individuals are disproportionately people of color. It’s been going on for years and it’s nothing new to the black community. Nearly ubiquitous video cameras and social media are now finally making it less easy for the wider community to ignore.

As sad, frustrating and angering as this all is, this moment presents a tremendous opportunity. To put it simply: systemic racism can’t be addressed effectively without white Americans being aware of it and acting to reduce it. Until recently, most white people in the country seem to have been living under the convenient but false perception that racism is more or less a problem of the past. Now, white Americans see racism as a national problem, but generally don’t think it is a major problem in their own communities. In general, it seems white Americans tell themselves that perhaps there is some discrimination that we still need to address, but it’s not violent, really serious stuff. Maybe there are some backward people down south who are real racists, but by and large we’ve gotten past it, at least in our own communities. Unfortunately, that’s wishful thinking. Ignorance may at times be bliss, but that only really holds for the privileged. And, anyway, there are outright racist people, and they aren’t just in the south.

My wife is African-American. Our nine years together have been a crash course in race relations for me. There is so much I could never have guessed about the black experience in the United States without being with her. To learn at the age of five that there were people who wanted you dead because of your skin color, and furthermore, to learn this from a six-year-old friend. To wish at the age of seven that you are actually a white girl so that you could avoid the burden of being black (this is not uncommon, and Whoopi Goldberg has a powerful performance about it in her 1985 standup show Direct From Broadway). To hear your mom talk of seeing the severed head of a black man rolling down the street in the 1960s. To ask your husband not to stop in Vidor, Texas—even though you are in a traffic jam, pregnant and really needing to pee, because during college you saw “Nigger, don’t let the sun go down on you in this town” written on a wall there. To fear interactions with the police, even though you are a law-abiding, upstanding citizen with graduate degrees from Harvard and Yale. Just last week, she was driving down a road in Austin, at the speed limit, and a police officer in an SUV pulled up beside her, eyed her and matched her pace for some time—nothing happened, but it felt very threatening. Frankly, I didn’t really get her concerns about the police until last year. Now it is all too clear, and it was really driven home by what happened to Sandra Bland, right here in our home state of Texas, and in a city we often pass on our drive between Houston and Austin.

My wife and I have watched the events of the past year with sadness and horror. We have two bright and beautiful sons. Like any parents, we have huge dreams for them and want to set them up to live the happiest, most fulfilled lives they can possibly realize. Yet, we live in a country and time where not only black men and women are killed without justifiable cause or with extremely fast judgment, but even children like Trayvon Martin and Tamir Rice. Where a 14-year-old girl in a bikini is thrown to the ground and sat on by a police officer for several minutes—an officer who also pulled a gun out to threaten two other teens who were concerned for her and who swore repeatedly at other teens at the same incident in McKinney, Texas. (I wrote to the chief of police to ask that the officer be dismissed.) Where Chris Lollie, a man waiting to pick up his kids in the St. Paul skyway, is apprehended without cause, tasered, and body searched by the police (and being polite didn’t help in the least). Where a 7-year-old boy is handcuffed for an hour for being unruly in class. It goes on and on—far too many to enumerate here. And because of all this, my wife and I frequently find ourselves watching and listening to the advice that many thoughtful people are giving about raising black children in the USA (e.g., Greta Gardner, Clint Smith, W. Kamau Bell). These are all concerns that were foreign to me and played no part in my own upbringing.

This July, my family took a vacation road trip from Texas to DC to Michigan and back. You learn a lot about the different parts of the US as a biracial family on such a trip. We nearly always stop at McDonald’s for bathroom breaks because we know there are cameras more consistently than in gas stations. We are quite accustomed to the hate stares directed at us, especially in poorer regions in the south. We also get disapproving looks from many black people, especially in black neighborhoods in cities like Houston and DC. Though it is usually just looks and stares, one white woman in a North Carolina rest stop loudly stated that she found our family “disgusting”. We planned our driving so that we wouldn’t have to stay the night in Missouri because of the recent racial tensions highlighted in Ferguson. (There is great irony in this, of course—our own state of Texas has its own poor track record with racism and police brutality, including recently the McKinney pool incident and Sandra Bland’s wrongful arrest and death and more.)

There was only one time on our trip that we felt real fear of more than looks and words. We were low on gas at one point and exited the highway to refuel, only to find the station we’d spotted was no longer operational—however, there were several trucks idling around this otherwise abandoned gas station. We immediately started to go, but our six-year-old declared he needed to pee, so I took him to the forest line—during which time more trucks started to show up. I hurried back as quickly as I could, and my wife had already hopped into the driver’s seat. We got out and back onto the highway fast. It may have been nothing, but it felt like something was possible. When I looked the location up later, I found out that it is a small township that hosts a chapter of the KKK. (I’m now definitely going to map the locations of such chapters out before we go on such a road trip again.)

So, we’ve thankfully only experienced mild discomfort as a family (my wife has experienced much more on her own, including being called a nigger by two white men in a car while walking on Harvard Square), but there is lots of stuff that is pretty bad going on out there. Shortly after our road trip, a similar biracial family on a long drive was stopped and cross-examined by police in a very intimidating manner. And there are plenty of people having rallies for the Confederate flag, and they don’t know their history, so let us admit it is not about “heritage”. They even show up at kids’ birthday parties and threaten people. They definitely don’t seem to like black people.

So… where’s the room for optimism? My best guess is that the availability heuristic is playing a big role here, in multiple ways. If it is possible for you at this point, go read the book “Thinking, Fast and Slow”, by Daniel Kahneman, to learn about the availability heuristic and much more. But you probably can’t do that, so here it is briefly: the availability heuristic is a shortcut used by the human mind to evaluate a topic by using examples that are readily retrieved from memory. As an example, consider the question “is the world more violent today than it was in the past?” Perhaps a majority of people would respond yes—it is certainly easy to come to that conclusion if you watch the news. However, Steven Pinker carefully argues in his excellent book “The Better Angels of Our Nature: Why Violence Has Declined” that the data points convincingly toward the opposite conclusion. In fact, he spends a large portion of a large book to get the reader past their own sense of the problem as biased by the availability heuristic. As it turns out, there has in fact never been a time when the probability of a given individual dying violently has been lower. But sex and violence are what sell news, so that’s what we hear about. Then, when we consider the question, the availability heuristic brings those examples quickly to mind. It’s much harder to think about the billions of people just boringly living their lives. There are obviously many pockets of the world and our society where these trends are not as encouraging, so it isn’t time to sit back and say all is well.

It seems quite likely that when someone like Shaun King considers a question like “is racism a permanent feature of American society?”, examples like the ones I’ve mentioned above easily come to mind and dominate the mental computation. Frankly, it happens to me too—it gets me angry and upset and I find myself listening more regularly to artists like Killer Mike, The Roots and even going back to Rage Against the Machine. And, this is not to say “yes” isn’t the right answer. It is just to say that we need to consider the availability heuristic’s potential role in arriving at that answer. I believe we need more data and perspectives before we truly give up hope. The other thing is that it is notoriously hard to make predictions, especially about the future. As just one related example, I heard one family member lament—just a year before Obama’s candidacy—that we’d never have a black president.

As another example, consider American slavery in the decade before the Civil War. It would have been reasonable to feel that slavery would be a permanent feature of American society. In the concluding chapter of “The Slavery Question” from the 1850s, the author, John Lawrence, writes:

Are there any prospects that the long and dreary night of American despotism will speedily end in a joyous morning?

If we turn our eye towards the political horizon we shall find it overspread with heavy clouds portentous of evil to the oppressed. The government of the United States is intensely pro-slavery. The great political parties, with which the masses of the people act, vie with each other in their supple and obsequious devotion to the slaveocracy. The wise policy of the fathers of the Republic to confine slavery within very narrow limits, so that it would speedily die out and be supplanted by freedom, has been abandoned; the whole spirit of our policy has been reversed and our national government seems chiefly concerned for the honor, perpetuation and extension of slavery.

Lawrence goes on to make further points of how dire the situation is, and quotes Frederick Douglass. But his book is called “The Slavery *Question*”, so he of course isn’t giving up. In fact, he flips it with excellent rhetorical flourish.

But dark as is this picture, there is still hope. The exorbitant demands of the slave power, the extreme measures it adopts, the deep humiliation to which it subjects political aspirants, will produce a reaction.

Inflated with past success it is throwing off its mask and revealing its hideous proportions. It is now proving itself the enemy of all freedom. The extreme servility of the popular churches is opening the eyes of many earnest people to the importance of taking a bolder position. They are finding out that it is a duty to come out from churches which sanction the vilest iniquity that ever existed, or exhaust their zeal for the oppressed in tame resolves, never to be executed.

The truth is gaining ground that slaveholding is a great sin, that slaveholders are great sinners, and that he who apologises for the system is a participator in the guilt and shame.

In other words, it’s a systemic problem, and not taking a position against slavery is to be complicit in its evils. In his concluding paragraph, he declares “The day of deliverance is not distant.” It took a bloody war, but a decade later, slavery was abolished.

And this brings us to what can be so frustrating about discussing current race relations with white Americans—namely that they have a very hard time discussing it. In fact, there is now a term, “white fragility,” that describes the odd sensitivity that nearly all white people have when discussing race. We just aren’t very good at it and it’s for a pretty obvious reason: we aren’t required to navigate race to function in our society, while any person of color must. There is also plenty of ambiguity to deal with since race itself is a social construct with very fluid boundaries, and a frequent white response is the well-intentioned but ultimately naive and counter-productive statement “I don’t see color”. One side of this leads to awkward, relatively harmless everyday encounters that can even be made light of — see “What if black people said the stuff white people say” (see also the videos for Latinos and Asians). But there is a deeper problem of systemic racial disparities that disproportionately benefit white Americans (for a very effective analogy, see this post comparing it to being a bicyclist on the road). The tricky nature of these benefits is that few white Americans realize and admit they are receiving them. They are working hard, dealing with their own successes, failures, pleasures and pains, and it sounds crazy to them that they are privileged. And in fact, this is a natural conclusion to reach when you rely on the availability heuristic to consider the topic.

Another dynamic here is that so few white people have close black friends. I don’t mean your co-worker or a person you see from time-to-time. I mean deep personal connections that allow true sharing and sympathetic understanding of another person’s life and experiences. It’s not uncommon for a black American to be THE black friend for many white people, and they are probably keeping a good share of themselves out of reach. My wife learned to do that after even simple comments led some friends and acquaintances to go into conniptions. One man asked my wife “is the singing in black churches as good as they say?”, to which she responded “the singing is great in all the black churches I’ve been to.” He became hysterical and declared that this was a racist thing for her to say. She tried to continue the conversation by contextualizing it more specifically, saying she hadn’t been to every black church and every white church, and that she was just stating her own experience. He just became more irate, and it really seemed that he just wanted to validate his existing prejudices. After exchanges like this and many others like it, it’s often easier just to avoid racial topics altogether.

It is also just common for white Americans to lack deep experiences with black Americans. Until I started dating my wife, I was similarly removed. I grew up in Rockford, Michigan. We had just a few black students in our high school and I didn’t know any of them. My eyes were opened to a number of things by listening to rap in the late 1980s, especially Willie D’s album Controversy, which included songs like “Fuck the KKK” (and many unfortunate misogynistic songs on the second side). My freshman college roommate at the University of Toledo was black and we got along great, but we didn’t hang out together much outside the dorm. I recognized that there were many problems for black Americans living in the inner cities, but I had little knowledge or appreciation for the day-to-day hurdles that black Americans faced regardless of their social status and location (often referred to as “paying the black tax”). It was never through any personal desire to be distanced, but it just didn’t happen until I fell in love with my amazing, wonderful wife in 2006. (Side note: we actually knew each other as students in Toledo in the 1990s. I had a crush on her, but considered her out of my league and didn’t do anything about it at the time. Doh!)

Much of the nation, it seems, expressed huge outrage about the killing of Cecil the Lion. At the same time, we had footage of a police officer shooting Sam Dubose in the head—and it hardly even seems to register outside the black community. I’m not setting up a false dilemma here: it’s fine to be upset about both killings; however, I’m highlighting the apparent higher proportion of the white population that is moved to express outrage by the former and what that says about priorities (especially when considering that much big game hunting is supporting nature preserves and endangered animal populations). Regardless, what I actually appreciate most about contrasting the two killings is how Cecil provided a platform for humorous, but serious, comparisons—most importantly, to highlight how every killing of an unarmed black person turns into an analysis of their character and actions and how those led or contributed to their being killed (as if it’s okay for police to be executioners). Doing the same for Cecil highlights the absurdity of this. Don’t forget that #AllLionsMatter, and can we also please have a serious discussion about lion on lion crime?

https://twitter.com/aamer_rahman/status/626551258250227712

In case it isn’t obvious, many of the common defenses of police violence meted out on black Americans are not much different from blaming a rape victim because she wore a particular skirt, flirted too much, drank too much, was out too late, and so on. If you don’t believe me, go back and watch the videos of Chris Lollie, Sandra Bland, and Sam Dubose. Consider that for the latter two, the statements about the stops by the officers involved were contradicted by the video evidence. Then consider the many cases where people have died at the hands of the police and there was no video to check the veracity of their version of events—the police are always cleared of wrongdoing. In the case of Sandra Bland, consider that there has been tons of focus on whether she committed suicide or was murdered, but let’s not forget it started with a completely ridiculous traffic stop. She should not have died in that cell because she should never have been there in the first place.

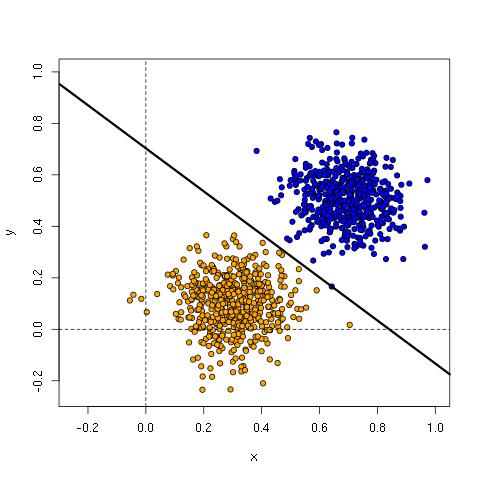

We need the police, but we need them to do their job right. That means to serve and protect all citizens, regardless of race, religion, sexual preference, etc. I hope that efforts in community-oriented and evidence-based policing will start to improve matters. It makes a lot of sense, but the data is still inconclusive as to whether it actually reduces crime and improves public perceptions of the police. I’m also encouraged that many police departments are adopting data-driven methodologies that have the potential to help reduce racial profiling and identify problem officers. We must also analyze and evaluate the potential for both improved policing and even worse racial profiling that are offered by new algorithms—a topic I wrote about in my article “Machine Learning and Human Bias”. Getting policing into better shape in the country will nonetheless require sustained efforts such as Justice Together and Campaign Zero, and those have a greater chance of success if white people are agitating for change as well as black people.

My family at the Lincoln Memorial.

I am optimistic that we can get to a better place as a society. My family’s road trip brought us to Washington DC, and we went to the Lincoln Memorial. It’s a powerful place, especially for a family like ours. The words of Lincoln’s second inaugural address are on the wall. At that time, the nation was nearing the end of its greatest existential crisis, but Lincoln showed tremendous restraint and forward-thinking, concluding:

With malice toward none, with charity for all, with firmness in the right as God gives us to see the right, let us strive on to finish the work we are in, to bind up the nation’s wounds, to care for him who shall have borne the battle and for his widow and his orphan, to do all which may achieve and cherish a just and lasting peace among ourselves and with all nations.

They did it—they actually defeated slavery and kept the nation together. One hundred and fifty years later, we are still working through the divisions created by that vile institution, including how we view that time and institution itself now. It’s hard, but we must remain optimistic as well as realistic.

It’s easy to feel overwhelmed by the scale of racism in the USA. But we can’t just throw up our hands. It’s not enough to be well-meaning and hold good intentions. All of us, black, white and more, must own our part of the solution. There is much that white Americans can do to understand and help. Talk to your kids explicitly about race and racism. My mom has gotten through to white friends who dismiss #BlackLivesMatter by talking about her black daughter-in-law and grandsons and how events impact them directly. Even if you have no strong personal connections to black Americans, you can start by reading books like “Between the World and Me” by Ta-Nehisi Coates to get a better sense of what it means to grow up black in the USA. It’s the best book I’ve read this year. I particularly like it because he states things starkly, with no sugar-coating: he puts forth a grounded, atheist viewpoint that doesn’t romanticize. Coates discusses what is done to black bodies, not black spirits, hopes and dreams.

“The spirit and soul are the body and brain, which are destructible—that is precisely why they are so precious.” – Ta-Nehisi Coates

The focus on the body allows him to dissociate the cultural from the perceived biological components of race, and remind us that white people aren’t white people, but are “people who believe they are white”. That’s an important, powerful distinction.

Actions such as legislation against racism (possibly limiting free speech) are not likely to improve things, and will likely make other things much worse. Support policies that seek to diminish our out-of-control prison system, which includes locations like Rikers Island and Homan Square where people have been held without being charged, sometimes for years. These places breathe life into Kafka’s book “The Trial”, and they destroy actual lives. Perhaps some of the biggest payoffs on societal issues like racism come from supporting policies that truly improve educational and economic opportunities for all Americans (no easy problem, I know—the important thing is to realize this is surely more important than symbolic actions). The more that each of us, regardless of our background, can fulfill our potential, the better our chances of getting along better.

Black lives matter, and ALL our lives depend on that. Spread love, not hate, and work for justice and equality of opportunity for all. My family wouldn’t have been possible if others hadn’t done the same. Meaningful change generally takes a long time, but it can come relatively rapidly too. Consider that couples like my wife and me could not legally marry in Texas and many other states until 1967—just seven years before we were born (many thanks to the Lovings and others of their generation!). Consider that it wasn’t until 1993 that marital rape was illegal in all 50 states. Consider that gay couples could only legally marry each other in all 50 states, well, this very year.

We can do this. We must do this.